Data quality signals are what make AI context trustworthy. Learn how the data control layer keeps them current at scale.

Apr 22, 2026

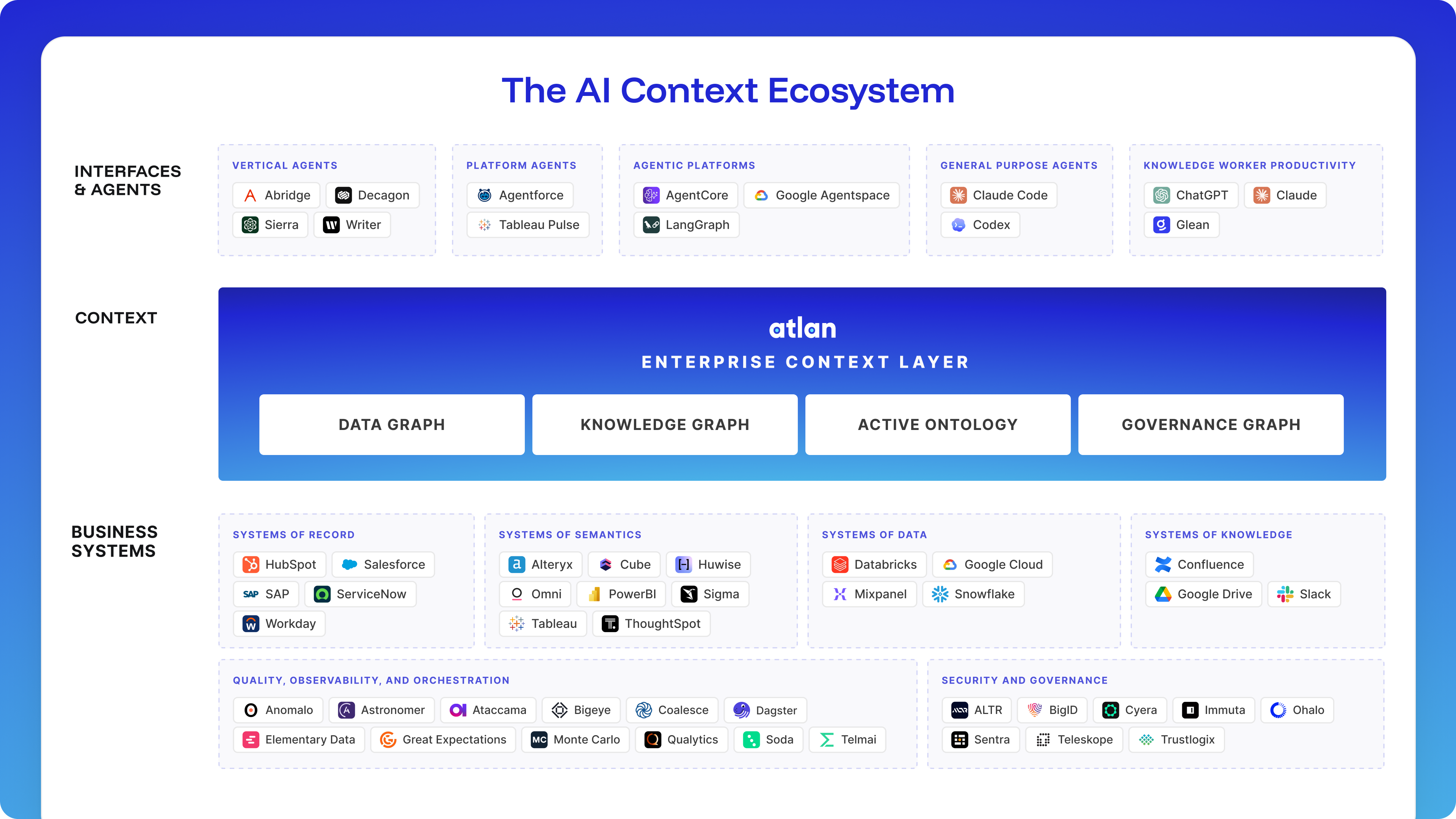

Every enterprise building toward production AI is investing in context infrastructure: lineage, semantic models, data quality signals, and governance policies. That investment is well-placed. The quality of what AI produces depends on the quality of the context it consumes. But most enterprises haven’t built the layer that keeps that context trustworthy.

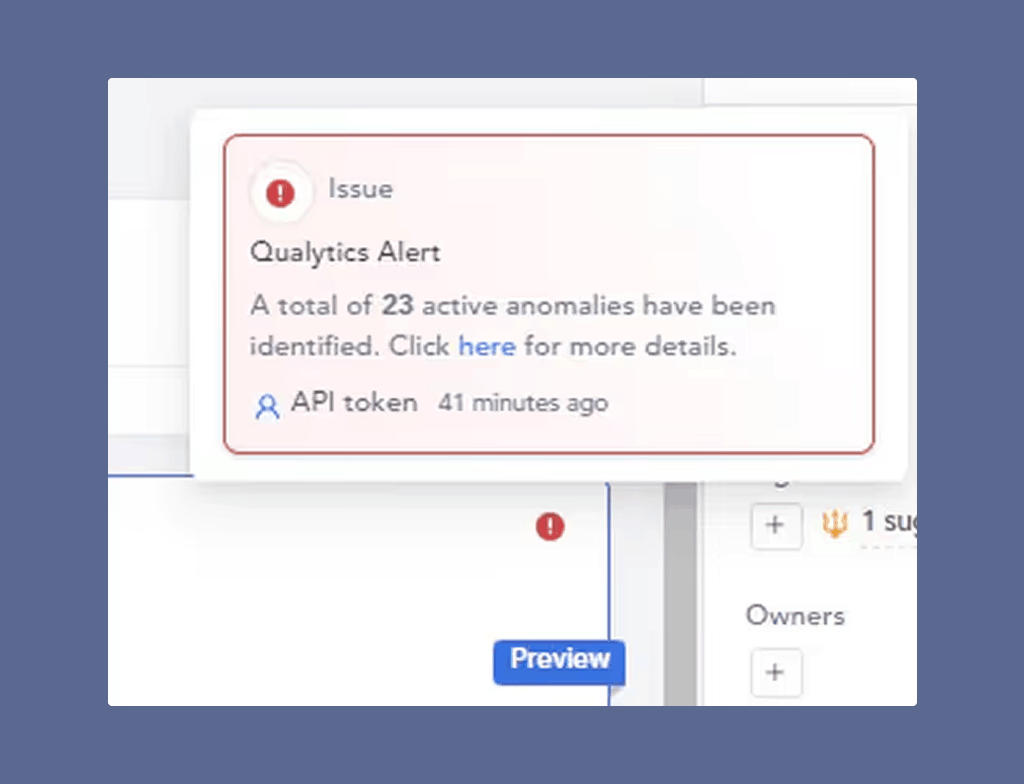

Many context layer architectures assume that the data flowing through them is reliable and that the attached quality signals reflect its current state, not a snapshot from last week's scan. For most enterprises, that assumption doesn't hold. And when AI agents act on context built from stale or incomplete quality signals, the result is confidently wrong decisions, propagated at machine speed.

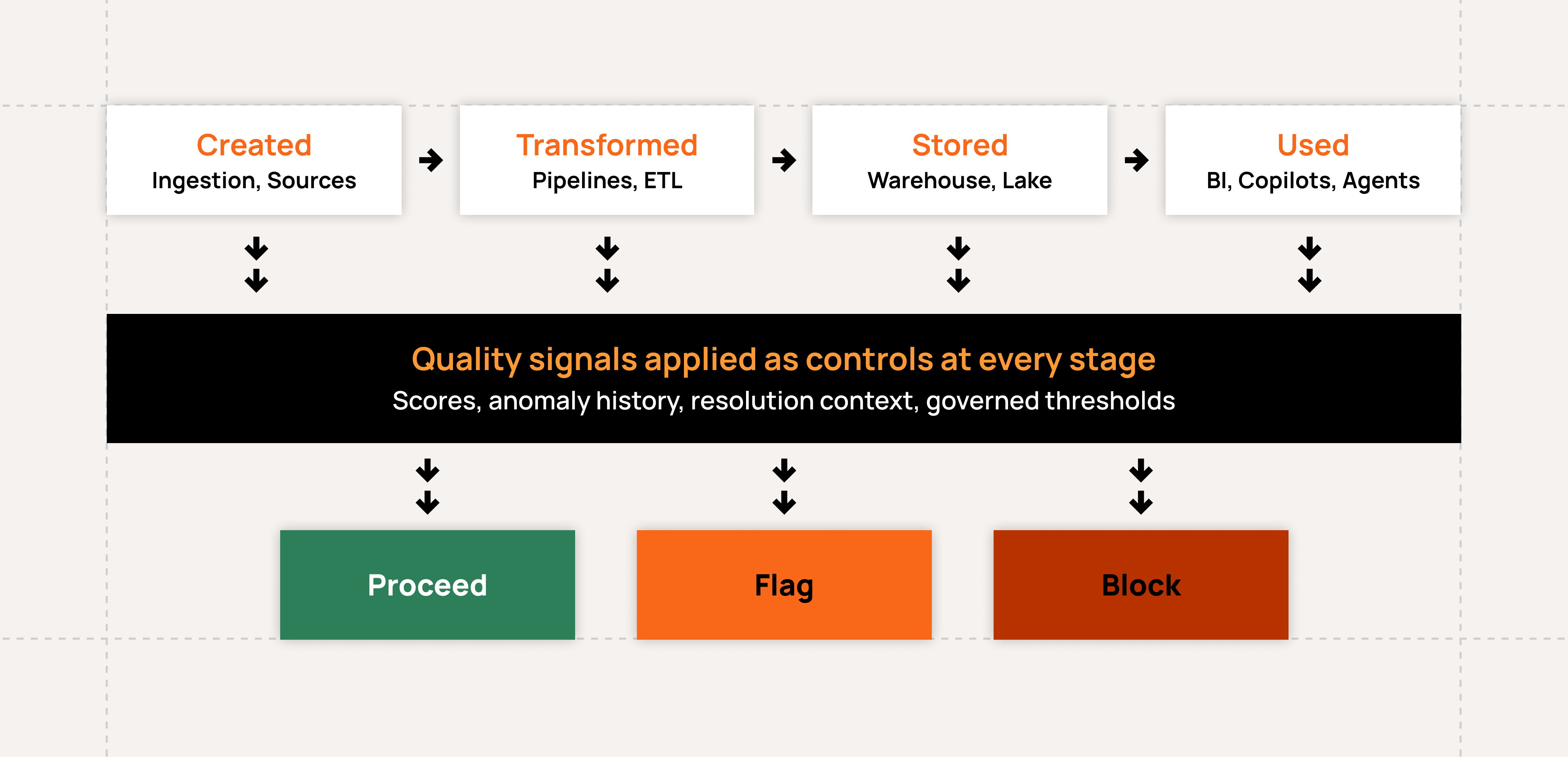

That's where data controls come in. Quality signals are where the context layer and the data control layer connect. The context layer carries and exposes them. The data control layer produces them, maintains them, and keeps them current as data evolves.

When AI is the consumer, nobody catches the error

Enterprises have been dealing with data quality problems for decades. What's changed is the cost structure when AI is the consumer, acting either autonomously or on behalf of human users.

Before AI entered production workflows, bad data still caused real damage, just more slowly. A financial analyst reviewing a KPI might notice something looked off, or a data engineer might catch a schema change before it hit production. These weren’t reliable safeguards; they were informal, inconsistent, and generally reactive, handling fires as they came up. But at least humans were in the loop and caught some anomalies.

Copilots and agents don't work that way. They retrieve, reason, and act at production scale, continuously, without pausing to verify. When data is wrong, they proceed anyway. Humans may be involved only as consumers of confident AI outputs, or not at all when agents act autonomously. And because these systems operate across workflows and write back to systems of record, a quality problem that would have surfaced in one report can now propagate across an entire enterprise before anyone sees it.

The cost of undetected quality issues is already significant. A global insurance company we work with quantified roughly $10 million per year in premium leakage tied to data quality issues that had gone undetected. The data was present and looked valid, but was wrong in ways that didn't surface until the business impact was already measurable. That was with humans in the loop. With AI acting on that same data, the $10 million problem can easily become a $100 million problem, and no one finds out until the damage is already in the financials.

What the context layer requires

Atlan's Enterprise Context Layer unifies lineage, semantic meaning, knowledge relationships, governance policies, and quality signals into the Enterprise Data Graph, a continuously updated foundation that AI agents can query at the moment they need to act. That’s exactly the architecture production AI requires. When an AI agent queries a data asset, it must know whether the data meets the current standards the business has set for it.

Getting those standards and signals into the context layer is only half the challenge. Keeping them current is the other half.

A quality score generated last week against a dataset that receives new data daily isn't reliable context for an agent acting right now. A handful of manually authored rules against a data source with thousands of fields doesn't provide meaningful coverage. For quality standards and signals in the context layer to be trustworthy, they have to be produced by a system that works continuously and comprehensively, without requiring teams to manually maintain every rule.
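To make those two failure modes concrete, here is a minimal sketch, assuming a quality signal can be summarized by a score, the time its checks last ran, the time the underlying data last changed, and how many fields the checks cover. The `QualitySignal` shape, the 90% coverage floor, and the one-hour grace window are illustrative assumptions, not part of Atlan's or Qualytics' products.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class QualitySignal:
    """Hypothetical shape of the quality signal attached to one data asset."""
    asset: str
    score: float               # share of checks passing, 0.0 to 1.0
    computed_at: datetime      # when the checks last ran
    data_updated_at: datetime  # when the asset last received new data
    fields_total: int          # number of fields in the asset
    fields_with_checks: int    # fields covered by at least one check


def coverage(signal: QualitySignal) -> float:
    """Fraction of the asset's fields covered by at least one check."""
    if signal.fields_total == 0:
        return 0.0
    return signal.fields_with_checks / signal.fields_total


def is_trustworthy(signal: QualitySignal,
                   min_coverage: float = 0.9,
                   max_lag: timedelta = timedelta(hours=1)) -> bool:
    """A signal is usable only if it is current and covers enough of the asset."""
    # Stale: new data arrived more than `max_lag` after the checks last ran.
    stale = signal.data_updated_at - signal.computed_at > max_lag
    return not stale and coverage(signal) >= min_coverage


# A score from last week's scan on an asset refreshed two hours ago,
# where hand-written checks cover only 40 of its 4,000 fields.
now = datetime(2026, 4, 22, 12, 0, tzinfo=timezone.utc)
weekly = QualitySignal("policies", 0.98, now - timedelta(days=7),
                       now - timedelta(hours=2), 4000, 40)
print(coverage(weekly))        # 0.01
print(is_trustworthy(weekly))  # False
```

Note that the 0.98 score never enters the decision: a high score is still not usable context if it is stale or describes only a sliver of the asset.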

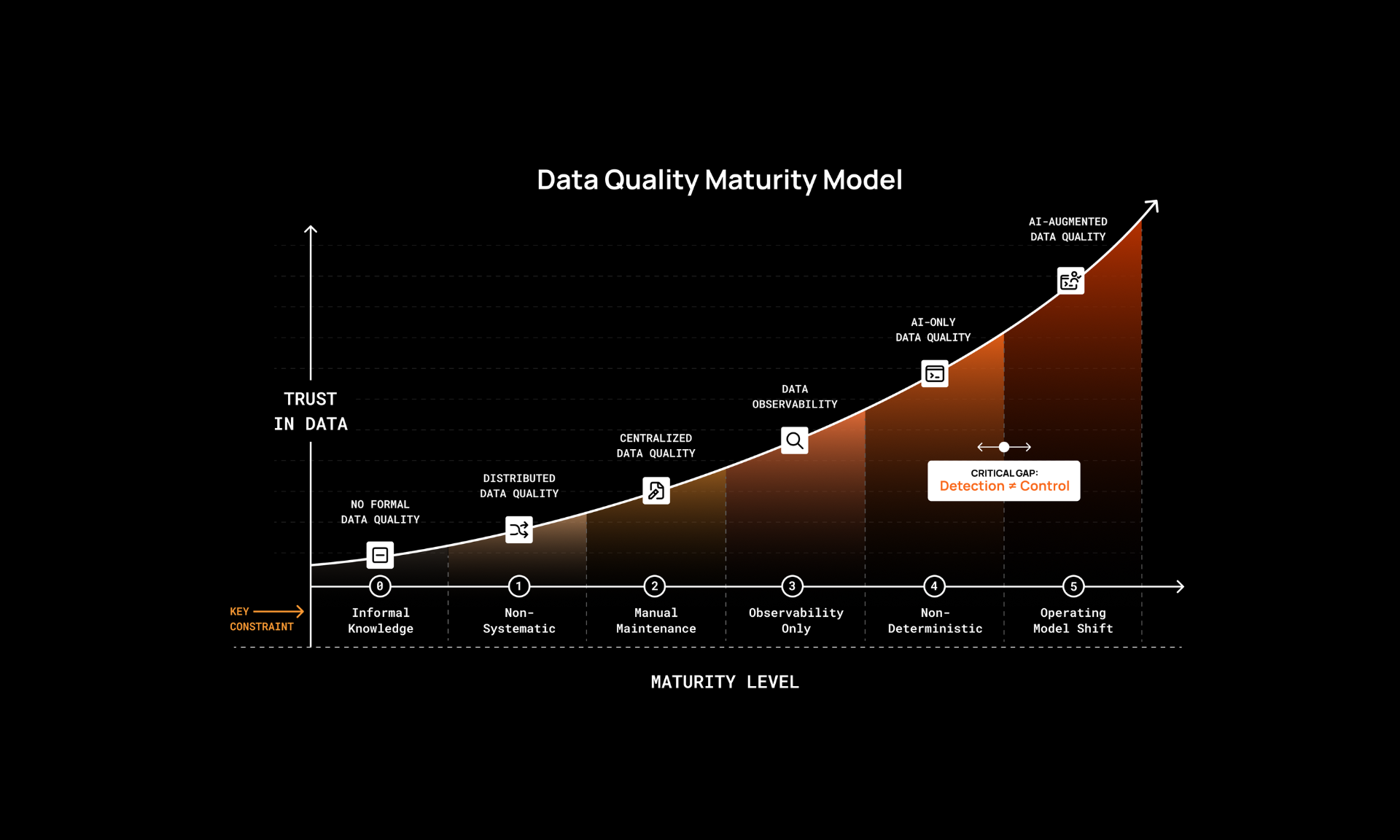

Augmented data quality to scale trusted context

At enterprise scale, you're looking at tens of thousands of quality checks across hundreds or thousands of data sources. No team can maintain that by hand; teams that try fall behind, and their coverage always lags. Teams that turn to fully autonomous systems fail differently: they get coverage without human oversight or business context. AI can’t reliably regulate AI on its own.

What scales is augmented data quality: AI and human expertise working in tandem, not AI replacing human judgment. AI infers the majority of quality rules from the observed behavior of your data, so broad coverage starts from day one. Human experts validate intent, define complex business logic, and refine the rules that automation can't derive on its own. As data models change and upstream systems evolve, rules recalibrate continuously rather than drifting until someone notices.
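As a rough illustration of that division of labor, the sketch below infers naive not-null and observed-range rules for a numeric column, keeps human-authored business rules alongside them, and re-infers from current data on each recalibration. Everything here (`Rule`, `infer_rules`, `recalibrate`, the min/max heuristic, the insurance-style fields) is a hypothetical simplification; real profiling is far more careful, and this is not meant to represent any vendor's inference method.

```python
from dataclasses import dataclass
from typing import Callable

Row = dict


@dataclass
class Rule:
    description: str
    check: Callable[[Row], bool]  # True when the row passes
    source: str                   # "inferred" or "human"


def infer_rules(rows: list[Row], column: str) -> list[Rule]:
    """Propose simple rules for one numeric column from its observed values."""
    values = [row.get(column) for row in rows]
    observed = [v for v in values if v is not None]
    rules: list[Rule] = []
    if observed and len(observed) == len(values):
        # No nulls observed so far, so propose a not-null rule.
        rules.append(Rule(f"{column} not null",
                          lambda row, c=column: row.get(c) is not None,
                          "inferred"))
    if observed:
        # Propose the observed range as the expected range.
        lo, hi = min(observed), max(observed)
        rules.append(Rule(f"{column} in [{lo}, {hi}]",
                          lambda row, c=column, lo=lo, hi=hi:
                              row.get(c) is None or lo <= row.get(c) <= hi,
                          "inferred"))
    return rules


def recalibrate(rows: list[Row], columns: list[str],
                human_rules: list[Rule]) -> list[Rule]:
    """Re-infer rules from current data; human-authored rules are always kept."""
    inferred = [rule for c in columns for rule in infer_rules(rows, c)]
    return inferred + human_rules


def violations(rows: list[Row], rules: list[Rule]) -> list[tuple[int, str]]:
    """Return (row index, rule description) for every failed check."""
    return [(i, rule.description)
            for i, row in enumerate(rows)
            for rule in rules
            if not rule.check(row)]


history = [
    {"status": "active", "premium": 120.0},
    {"status": "active", "premium": 300.0},
    {"status": "lapsed", "premium": 0.0},
]
# Business logic an expert supplies; it cannot be read off the observed values.
human = [Rule("active policies have a positive premium",
              lambda row: row.get("status") != "active"
                          or (row.get("premium") or 0) > 0,
              "human")]
rules = recalibrate(history, ["premium"], human)

new_batch = [
    {"status": "active", "premium": 0.0},
    {"status": "active", "premium": 5000.0},
]
print(violations(new_batch, rules))
# [(0, 'active policies have a positive premium'), (1, 'premium in [0.0, 300.0]')]
```

The inferred range alone would accept a zero premium on an active policy, because zero appeared in the history on a lapsed policy; the human-authored rule catches it. Conversely, nobody had to hand-write the range check that flags the 5,000 premium.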

That's the level of coverage and responsiveness that produces quality signals worth trusting. It's also what the data control layer is built to deliver: continuously maintained, augmented quality controls that feed directly into the context layer as reliable, current signals.

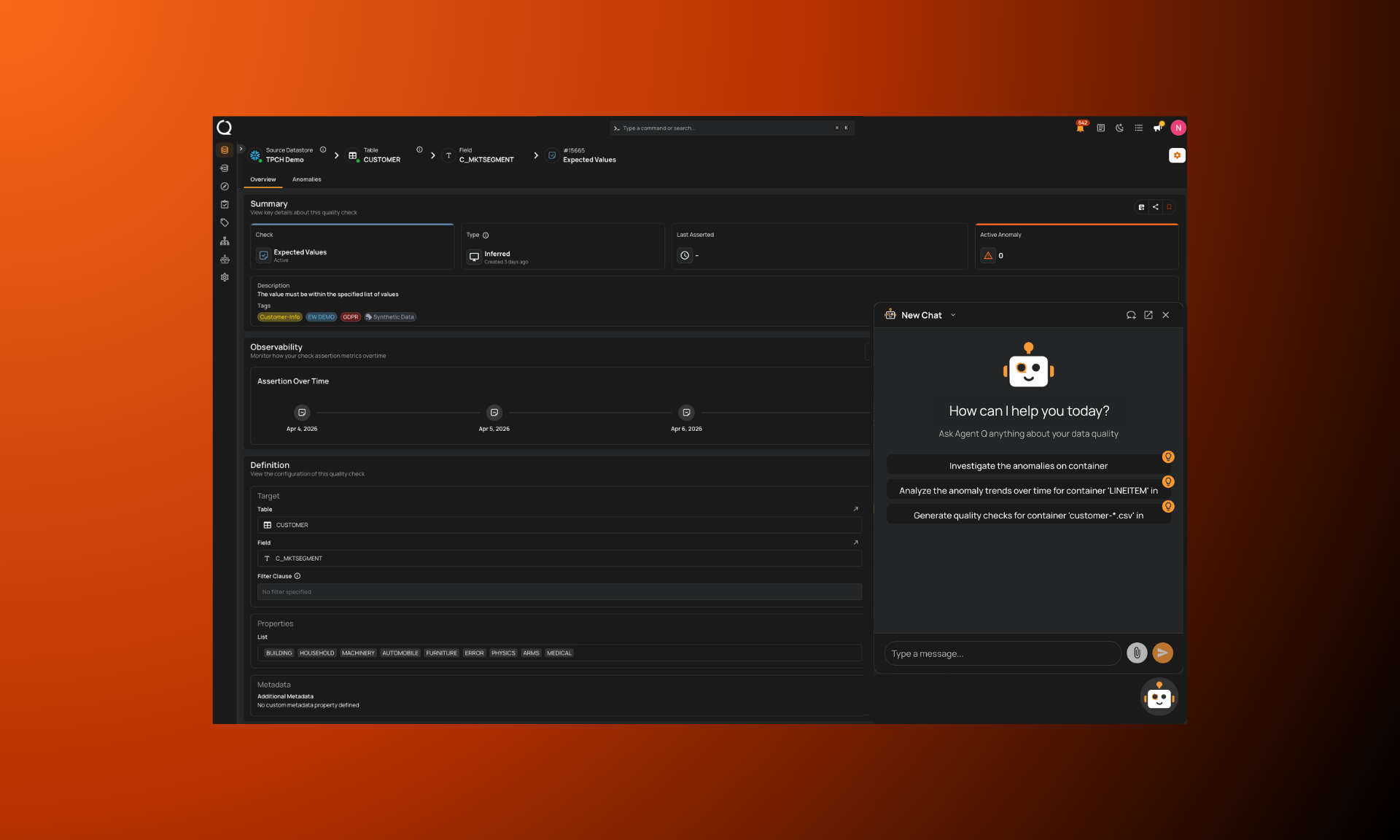

Validate-at-use, in practice

Data should be assessed at the moment it’s used, not just at predefined checkpoints. Quality signals have to travel with the data into every system that consumes it, most importantly into the context layer that AI agents query.
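One way to read validate-at-use in code: rather than relying only on a score computed at the last checkpoint, an agent runs the relevant checks against the exact records it is about to act on, and escalates instead of writing back when any fail. This is a minimal sketch under stated assumptions; `validate_at_use`, `guarded_action`, and the example checks are hypothetical, and in the architecture described here the checks would presumably come from the data control layer through the context layer rather than being defined inline.

```python
from typing import Callable

Record = dict
Check = Callable[[Record], bool]


def validate_at_use(records: list[Record],
                    checks: dict[str, Check]) -> list[tuple[int, str]]:
    """Run every check against the exact records about to be used."""
    return [(i, name)
            for i, record in enumerate(records)
            for name, check in checks.items()
            if not check(record)]


def guarded_action(records: list[Record],
                   checks: dict[str, Check],
                   action: Callable[[list[Record]], None]) -> dict:
    """Apply `action` only if the records pass their checks at this moment."""
    failures = validate_at_use(records, checks)
    if failures:
        # Skip the write-back and surface the failures for human review.
        return {"status": "escalated", "failures": failures}
    action(records)
    return {"status": "applied", "count": len(records)}


checks = {
    "premium is positive": lambda r: (r.get("premium") or 0) > 0,
    "currency is set": lambda r: bool(r.get("currency")),
}
written: list[Record] = []  # stands in for a system of record
batch = [
    {"premium": 120.0, "currency": "USD"},
    {"premium": -5.0, "currency": "USD"},
]
print(guarded_action(batch, checks, written.extend))
# {'status': 'escalated', 'failures': [(1, 'premium is positive')]}
print(written)  # []
```

The design choice is that the whole batch is held back on any failure, so a single bad record cannot slip into the system of record alongside good ones.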

When Qualytics and Atlan operate together, quality standards and signals flow directly into Atlan’s Enterprise Context Layer alongside lineage, business meaning, knowledge relationships, and governance policies, giving AI agents a complete, current picture of every asset they touch. Qualytics maintains continuously updated quality signals across data assets, from rules to resolution history to quality scores. Atlan's Enterprise Context Layer exposes those signals to AI at the point of use. When a copilot or agent queries a data asset, it receives context that includes current, reliable quality information, produced by a system that covers the data proactively rather than one that waits for a problem to surface downstream.

Atlan integrates with upstream quality tools precisely because quality signals are most valuable when they come from purpose-built systems with deep coverage. Qualytics produces and maintains quality controls at scale; Atlan carries them into the context layer. That's how production AI gets the full picture it needs to act reliably.

The infrastructure conversation is happening now

Atlan Activate is bringing together the ecosystem partners that make the Enterprise Context Layer production-ready. Data quality is one of those components, and it may be both the most important and the hardest to get right.

Context infrastructure without reliable quality controls will produce AI systems that are well-informed and confidently wrong. Context and quality controls both have to be continuously maintained. That's the foundation the next generation of enterprise AI is being built on.

You can’t trust context you can’t control.