Don't just observe your data. Control it.

Qualytics delivers quality signals as real-time controls across analytics, applications, and AI workflows. Copilots check context before generating, and agents enforce thresholds before acting. This is validate-at-use.

Why validate-at-use?

Traditional data quality lives in pipeline checks, batch validations, and orchestration workflows. That doesn't go away. But predefined stages of validation are not enough when copilots are retrieving context and agents are taking action in real time. Data quality has to be available wherever data is used, delivered as controls that shape how systems behave.

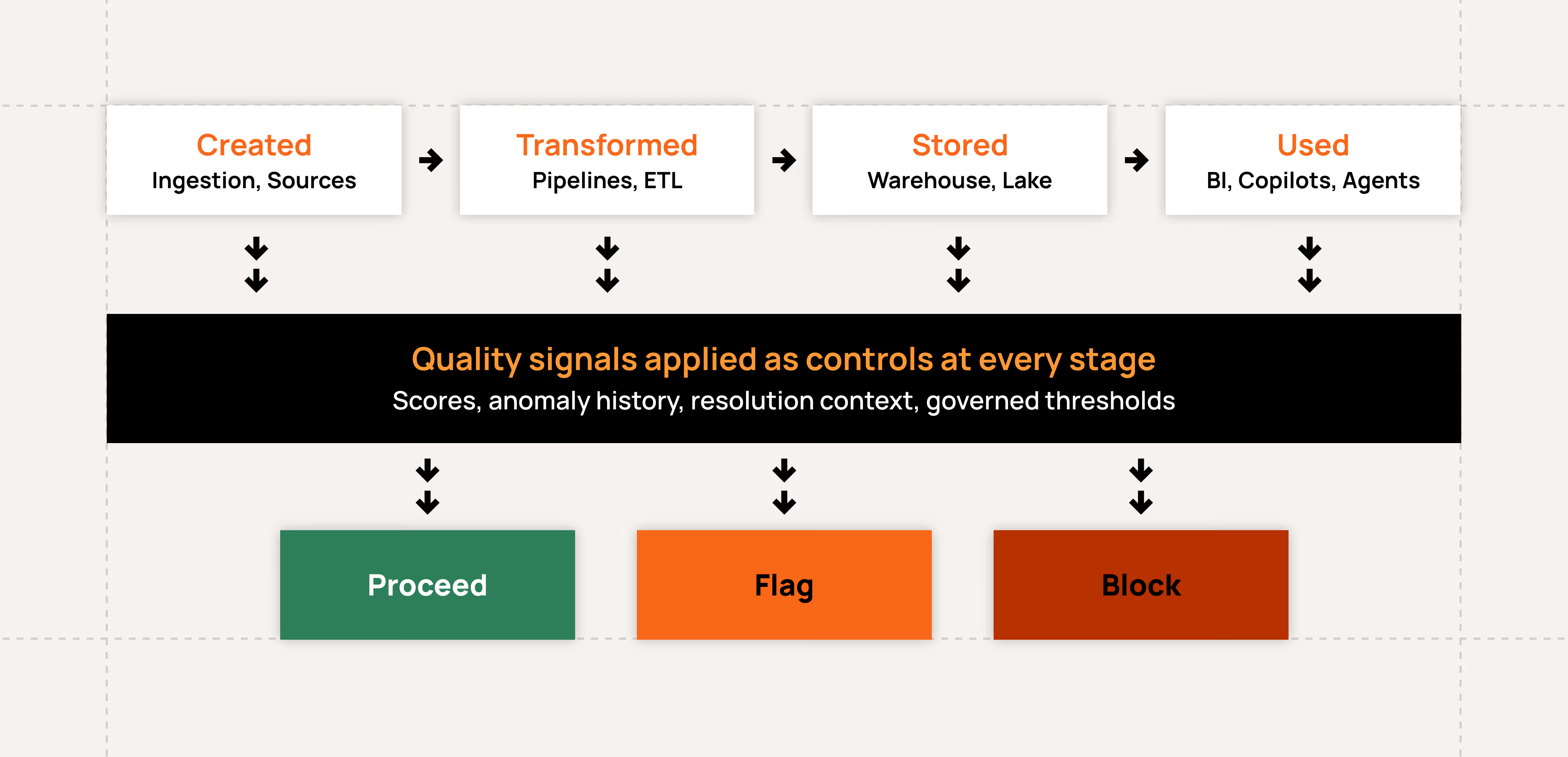

Quality Signals as Controls

Quality scores, past anomalies, resolution context, and governed rules are delivered directly into the systems that consume your data. These signals function as controls that determine whether systems should proceed, flag, or block based on governed thresholds.

Quality scores at field, container, and datastore levels

Anomaly history and resolution context attached to data at point of use

Governed thresholds that shape system behavior in real time

Controls applied continuously from creation to consumption
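
As an illustration of how a quality signal can act as a control, here is a minimal sketch of a proceed/flag/block decision. The `Decision` enum, the `Thresholds` values, the 0-100 score scale, and the `decide` function are all hypothetical names chosen for this example; they are not part of the Qualytics API.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    PROCEED = "proceed"
    FLAG = "flag"
    BLOCK = "block"


@dataclass(frozen=True)
class Thresholds:
    # Hypothetical governed thresholds, assuming a 0-100 quality score.
    block_below: float = 60.0
    flag_below: float = 85.0


def decide(quality_score: float, active_anomalies: int,
           thresholds: Thresholds = Thresholds()) -> Decision:
    """Map a quality score and open anomaly count to a control outcome."""
    if quality_score < thresholds.block_below:
        return Decision.BLOCK
    # A middling score or any unresolved anomaly warrants a flag.
    if quality_score < thresholds.flag_below or active_anomalies > 0:
        return Decision.FLAG
    return Decision.PROCEED
```

A consuming system would call `decide` with whatever score and anomaly count it retrieved for the data it is about to use, then branch on the result.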

MCP for Copilots

MCP (Model Context Protocol) gives external AI systems like Claude or ChatGPT access to data quality context as part of their reasoning process. Before generating an output, a copilot can retrieve quality scores, check for active anomalies, and understand resolution history for the data it's about to use.

Quality scores and anomaly context available to copilots in real time

Resolution history surfaced as part of the reasoning process

Insights grounded in governed context, not raw data

No custom integration required

Trusted Context for Agents

Make trusted context available to any external agent through integration patterns designed for how agents actually work. Connect through the Qualytics API to pull trusted context for data under evaluation, or validate data points in real time before the agent acts. For agents that operate closer to the data, Qualytics writes enriched context directly to a database you control. Or invoke AgentQ, Qualytics's built-in expert agent, as part of your agentic workflow to bring deep data quality reasoning and context into decision logic.

Real-time validation of new data points with instant pass/fail results

Past anomaly and resolution context available at point of action

Governed thresholds enforced before agents act

Flag, block, or adapt behavior based on control outcomes
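
The validate-before-acting pattern can be sketched in a few lines. This is an assumption-laden illustration, not the Qualytics API: the rules in `RULES`, the `ValidationResult` type, and the `validate` and `act_if_valid` helpers are hypothetical stand-ins for governed checks that would be evaluated on the platform.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List

Record = Dict[str, Any]
Rule = Callable[[Record], bool]

# Hypothetical governed rules, defined locally only for illustration.
RULES: Dict[str, Rule] = {
    "amount_is_positive": lambda r: isinstance(r.get("amount"), (int, float))
    and r["amount"] > 0,
    "currency_is_known": lambda r: r.get("currency") in {"USD", "EUR", "GBP"},
}


@dataclass
class ValidationResult:
    passed: bool
    failed_rules: List[str] = field(default_factory=list)


def validate(record: Record, rules: Dict[str, Rule] = RULES) -> ValidationResult:
    """Run every rule against one in-memory record and report pass/fail."""
    failed = [name for name, rule in rules.items() if not rule(record)]
    return ValidationResult(passed=not failed, failed_rules=failed)


def act_if_valid(record: Record, action: Callable[[Record], str]) -> str:
    """Run the agent's action only when the record passes every rule."""
    result = validate(record)
    if not result.passed:
        return "blocked: " + ", ".join(result.failed_rules)
    return action(record)
```

An agent would wrap its side-effecting step in `act_if_valid`, so a failing record is blocked with the names of the rules it violated rather than acted on.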

Quality Metadata for Traditional Analytics

The same quality signals that power copilot and agent integrations are delivered into your existing analytics, applications, and orchestration workflows. Pipeline checks, batch validations, and dashboard quality scores all operate on the same governed foundation.

Quality signals delivered into BI tools and dashboards

Pipeline quality gates integrated with orchestration workflows

Same signals, same thresholds across traditional and AI consumption

No separate infrastructure for different consumption patterns
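
A pipeline quality gate can be as simple as a step that stops the run when too many checks fail. The sketch below assumes check outcomes arrive as a list of booleans; `quality_gate`, `QualityGateError`, and the 98% default are hypothetical choices for this example, not Qualytics or orchestrator APIs.

```python
from typing import Iterable


class QualityGateError(RuntimeError):
    """Raised to halt a pipeline step when quality falls below the gate."""


def quality_gate(check_results: Iterable[bool], min_pass_rate: float = 0.98) -> float:
    """Return the pass rate, or raise if it is below the required minimum."""
    results = list(check_results)
    if not results:
        raise QualityGateError("no check results to evaluate")
    pass_rate = sum(results) / len(results)
    if pass_rate < min_pass_rate:
        raise QualityGateError(
            f"pass rate {pass_rate:.2%} is below the required {min_pass_rate:.2%}"
        )
    return pass_rate
```

Because the gate raises an exception, most orchestrators will mark the task as failed and skip downstream steps without extra wiring.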

The Results

Why enterprises use Qualytics for data quality

20x ROI

in year one, by automating over 20K data-quality rules

$3.67M

in projected savings through reclaimed engineering hours

40+

integrations into the enterprise data stack

Frequently Asked Questions

Qualytics supports any SQL datastore and raw files on object storage. This includes modern platforms like Snowflake, Databricks, BigQuery, and Redshift, relational databases like MySQL, PostgreSQL, and Microsoft SQL Server, and file formats like CSV, XLSX, and JSON on AWS S3, Google Cloud Storage, and Azure Data Lake Storage. Qualytics also integrates with streaming data sources through our API.

Qualytics is built on Apache Spark and deployed via Kubernetes, with vertical and horizontal scalability designed for enterprise volumes. Customers run tens of thousands of rules against billions of rows in SaaS, on-prem, and hybrid environments.

Raw data is not retained. It is pulled into memory for analysis and subsequently destroyed. Anomalies and metadata are written to an enrichment datastore maintained by the customer. Highly regulated industries can deploy Qualytics within their own network where raw data never leaves their environment.

The same quality signals that power copilot and agent integrations are delivered into your existing analytics, applications, and orchestration workflows. Pipeline checks, batch validations, and dashboard quality scores all operate on the same governed foundation. Validate-at-use extends your existing quality processes. It doesn't replace them.

New data sources can be onboarded in minutes. Automated rule inference delivers broad coverage from day one. Customers like MAPFRE USA gained thousands of inferred rules in a single day, coverage that would have taken months of engineering effort to build manually.

Agents access trusted context through the Qualytics API in three ways. They can retrieve quality scores, anomalies, and resolution history for data they're evaluating. They can validate data points in real time by passing them in-memory and running governed quality checks before acting. Or they can invoke AgentQ through its API endpoints to bring deep data quality reasoning into their decision logic without building it themselves. Agents also have the option to query the enrichment datastore directly for low-latency access to the same governed metadata. In all cases, agents inherit the same business logic and governance that human teams define.

MCP (Model Context Protocol) connects external AI systems like Claude or ChatGPT to Qualytics as part of their reasoning process. Before generating an output, a copilot can retrieve quality scores, check for active anomalies, and understand resolution history for the data it's about to use. No custom integration is required.

MCP and the Qualytics API operate on the same governed foundation as your existing quality rules, signals, and workflows. Any coverage you've already built in Qualytics is immediately available to copilots and agents without additional configuration.

Ready to deliver trusted data at the point of use?